Multi-agent AI systems: when they help and when they complicate things

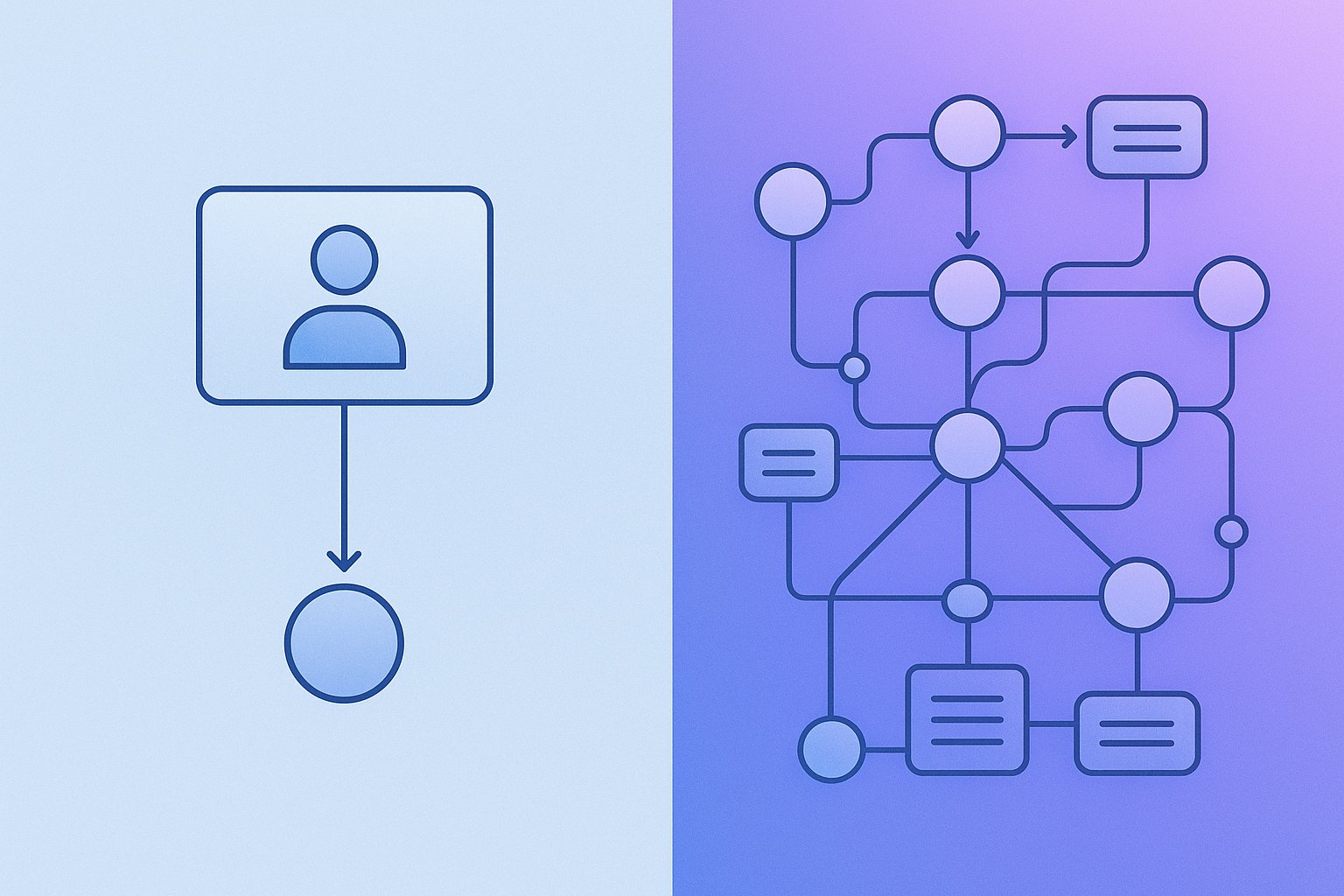

Multi-agent AI systems sound like the natural next step after single agents. The thing is, not every problem benefits from adding more “roles,” orchestration, and communication between components. Find out when a multi-agent architecture makes sense and when it’s better to choose a simpler setup.

Multi-agentism is not an end in itself

A lot of expectations have built up around multi-agent systems. The vision is tempting: one agent plans, another gathers data, a third writes code, a fourth reviews, and a fifth watches over compliance and security. It looks great on a diagram. It looks great in a demo too. The problem starts when you have to maintain, monitor, and justify such a setup to the business.

For system architects, CTOs, and R&D teams, the key question today is not: can a multi-agent system be built? It can. The more practical question is: in a given case, does the benefit of splitting responsibilities outweigh the cost of complexity?

Multi-agentism is not just more “intelligence.” It also means:

- more intermediate states,

- more points of failure,

- higher token and infrastructure costs,

- harder debugging,

- a larger risk surface when integrating with tools.

So if you’re considering this architecture model, it’s worth setting marketing promises aside and looking at the topic like an engineer: through the lens of tasks, constraints, and operational reality.

What a multi-agent system actually is

In practice, we’re talking about a system in which several AI agents pursue a shared goal, but with divided roles, context, or responsibilities. That division can take different forms.

The most common patterns are:

- planner-executor – one agent plans the steps, another executes them,

- specialized agents – each agent is responsible for a different domain, e.g. law, finance, support, code,

- critic / reviewer loop – one agent generates an output, another evaluates and improves it,

- router + specialists – a higher-level agent routes the task to the right specialist,

- society / debate pattern – several agents propose solutions and the system selects the best one.

This distinction matters, because not every system with multiple prompts is automatically a sensible multi-agent architecture. Sometimes it’s just a sequence of steps that could just as well be handled by a single agent with a well-designed workflow.
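
To make the first pattern concrete, here is a minimal planner-executor sketch. It rests on assumptions: `plan` returns a hard-coded list where a real planner would call a model, and the `ACTIONS` table and its action names are hypothetical stand-ins for real tools.

```python
from typing import Callable, Dict, List

Step = Dict[str, str]

def plan(goal: str) -> List[Step]:
    """Stand-in planner: a real one would ask a model to produce the steps."""
    return [
        {"action": "gather", "input": goal},
        {"action": "summarize", "input": "gathered data"},
    ]

# Executor capabilities keyed by action name (hypothetical).
ACTIONS: Dict[str, Callable[[str], str]] = {
    "gather": lambda text: f"data about {text}",
    "summarize": lambda text: f"summary of {text}",
}

def execute(steps: List[Step]) -> List[str]:
    """Run each planned step; reject anything outside the declared actions."""
    results = []
    for step in steps:
        action = ACTIONS.get(step["action"])
        if action is None:
            raise ValueError(f"unknown action: {step['action']}")
        results.append(action(step["input"]))
    return results
```

Note that the plan here is just data. If the planner never produces anything but the same fixed list, that is a hint the "agent" is really a workflow.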

When multi-agent AI really makes sense

There are scenarios where splitting work across agents brings real value. Not just a “cool experiment,” but an advantage in quality, scalability, or safety.

1. When the problem is naturally divisible into roles

If a task resembles teamwork among people, multi-agentism can make sense. A good example is complex analytical processes:

- one agent gathers data from multiple sources,

- another normalizes and assesses data quality,

- another builds conclusions,

- another prepares a report for a specific audience.

In such a setup, the roles are clear and the interface between them can be described. That’s important. When responsibility boundaries are blurry, the system starts to resemble a meeting without an agenda. Everyone says something, but no one knows who is responsible for what.

2. When you need context isolation

A single agent with a very broad context can be less predictable than several smaller, specialized components. Context isolation helps when:

- different parts of the task operate on different data sources,

- you want to limit exposure of sensitive information,

- you need precise tool-access policies,

- a single prompt becomes too long and hard to maintain.

Example: an agent responsible for legal document analysis does not need access to the ticketing system, and an agent for prioritizing requests should not see all contracts. In a multi-agent architecture, this is easier to separate.
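
The separation from this example could be enforced in ordinary code rather than in a prompt. A minimal sketch, assuming hypothetical tool names (`search_contracts`, `list_tickets`) and a made-up `AgentProfile` type:

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet

# Hypothetical tools; real ones would call a document store or a ticketing API.
def search_contracts(query: str) -> str:
    return f"contracts matching '{query}'"

def list_tickets(query: str) -> str:
    return f"tickets matching '{query}'"

TOOLS: Dict[str, Callable[[str], str]] = {
    "search_contracts": search_contracts,
    "list_tickets": list_tickets,
}

@dataclass(frozen=True)
class AgentProfile:
    name: str
    allowed_tools: FrozenSet[str]

    def call_tool(self, tool: str, query: str) -> str:
        # The allowlist is checked in code, so a prompt cannot talk its way past it.
        if tool not in self.allowed_tools:
            raise PermissionError(f"{self.name} may not call {tool}")
        return TOOLS[tool](query)

legal_analyst = AgentProfile("legal_analyst", frozenset({"search_contracts"}))
triage = AgentProfile("triage", frozenset({"list_tickets"}))
```

With this setup, `triage.call_tool("search_contracts", "NDA")` raises `PermissionError`, while the same call from `legal_analyst` succeeds.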

3. When you need mutual control

A single agent can be fast, but it can also be too confident. A generator + critic or executor + validator setup can significantly improve answer quality in tasks where the following matter:

- compliance with procedure,

- completeness of the answer,

- detecting contradictions,

- reducing hallucinations,

- controlling output format and quality.

This is especially useful in regulated, technical, or high-risk areas. Of course, it does not guarantee correctness. But it does provide an additional layer of checking that can be measured and improved.
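
A generator + critic loop can be sketched in a few lines. This is an illustration under assumptions: `toy_generate` and `toy_critique` are stand-ins for model calls, and the "missing source" check is an invented quality rule.

```python
from typing import Callable, List, Tuple

def review_loop(
    generate: Callable[[str, List[str]], str],
    critique: Callable[[str], List[str]],
    task: str,
    max_rounds: int = 3,
) -> Tuple[str, int]:
    """Alternate generate and critique until no issues remain or the budget ends.

    Returns the last draft and the number of generation rounds used.
    """
    issues: List[str] = []
    draft = ""
    for round_no in range(1, max_rounds + 1):
        draft = generate(task, issues)
        issues = critique(draft)
        if not issues:
            return draft, round_no
    return draft, max_rounds

# Stand-ins for model calls (assumptions for illustration only).
def toy_generate(task: str, issues: List[str]) -> str:
    draft = f"Answer to: {task}"
    if "missing source" in issues:
        draft += " (source: internal KB)"
    return draft

def toy_critique(draft: str) -> List[str]:
    return [] if "source:" in draft else ["missing source"]
```

The hard cap on rounds matters as much as the critic itself: without it, a strict reviewer and a stubborn generator can loop for as long as the budget allows. The number of rounds is also an easy metric to track over time.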

4. When the process requires many tools and integrations

The more tools, APIs, and data sources you have, the more sense it makes to separate agents responsible for specific operations. A single agent may handle a simple tool choice well. But if the system needs to use:

- document search,

- knowledge bases,

- ERP or CRM systems,

- code repositories,

- operational tools,

- validation and audit mechanisms,

then specialization usually improves overall control.

From an architect’s perspective, this is not just about answer quality. It’s also about clearer permissions, easier call logging, and more predictable system behavior.

5. When you want to optimize cost and model per role

This is often underestimated. Not every agent has to run on the same model. A planner can use a stronger reasoning model, while an execution agent can use a simpler and cheaper one. A classification agent can operate almost deterministically, while a report-synthesis agent may need more flexibility.

In a well-designed multi-agent system, you can optimize:

- inference cost,

- latency,

- quality level at each process stage,

- retry and fallback policies.

At that point, it’s no longer a game of “more agents,” but deliberate pipeline design.
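
Per-role configuration might look like the sketch below. Everything specific in it is a placeholder: the model tier names, the prices, and the retry counts are invented for illustration and do not refer to real offerings.

```python
from dataclasses import dataclass
from typing import Dict

@dataclass(frozen=True)
class RoleConfig:
    model: str                 # hypothetical model tier name
    usd_per_1k_tokens: float   # made-up price, for illustration only
    max_retries: int

# All names and prices below are placeholders.
ROLES: Dict[str, RoleConfig] = {
    "planner": RoleConfig("large-reasoning", 0.010, max_retries=1),
    "executor": RoleConfig("small-fast", 0.001, max_retries=2),
    "classifier": RoleConfig("small-fast", 0.001, max_retries=0),
}

def estimate_cost(tokens_per_role: Dict[str, int]) -> float:
    """Estimate the cost of one run from expected tokens per role (retries ignored)."""
    return sum(
        ROLES[role].usd_per_1k_tokens * tokens / 1000
        for role, tokens in tokens_per_role.items()
    )
```

With these placeholder prices, a run that spends 2,000 tokens on planning and 6,000 on execution comes to about 0.026 USD, while pushing all 8,000 tokens through the planner tier would come to about 0.08 USD.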

When multi-agentism is overengineering

Now for the less romantic part. A lot of implementations do not need several agents. They need a better design for one agent, a solid workflow, and sensible tool integration.

1. When the problem can be described as a simple pipeline

If the task looks like this:

- fetch data,

- filter it,

- generate an answer,

- return the result,

then you often do not need a society of agents. You need a well-designed process. Many teams build a multi-agent architecture where a single agent would be enough with:

- a controlled system prompt,

- explicitly defined tools,

- simple output validation,

- memory limited to the specific task.

The difference? Fewer moving parts, fewer logs to analyze, fewer surprises.
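
The four steps above fit into plain functions around a single model call. A minimal sketch, assuming an in-memory `DOCS` list in place of a real data source and an `llm` callable that you supply:

```python
from typing import Callable, List

# Hypothetical in-memory knowledge base standing in for a real data source.
DOCS = [
    "Refunds are processed within 14 days.",
    "Support is available on weekdays.",
    "Refunds require the original receipt.",
]

def fetch(query: str) -> List[str]:
    # A real implementation would query a search index; this returns everything.
    return list(DOCS)

def filter_relevant(query: str, docs: List[str]) -> List[str]:
    terms = query.lower().split()
    return [d for d in docs if any(t in d.lower() for t in terms)]

def generate_answer(query: str, docs: List[str], llm: Callable[[str], str]) -> str:
    prompt = f"Question: {query}\nContext:\n" + "\n".join(docs)
    return llm(prompt)

def validate(answer: str) -> str:
    # Simple output validation: refuse empty answers.
    if not answer.strip():
        raise ValueError("empty answer")
    return answer.strip()

def run_pipeline(query: str, llm: Callable[[str], str]) -> str:
    docs = filter_relevant(query, fetch(query))
    return validate(generate_answer(query, docs, llm))
```

Each stage can be unit-tested with a fake `llm`, which is much harder to do once the stages are agents negotiating with each other.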

2. When you still can’t measure the quality of a single agent

This is a common R&D mistake. The team does not yet have stable metrics for the simple solution, but it already moves on to multi-agent orchestration. The result is predictable: it’s unclear whether improvement or degradation comes from the model, the prompt, routing, the planner, the tool, or even the order of steps.

If you don’t have answers to questions like:

- what the quality baseline is,

- where exactly errors occur,

- which task types fail,

- how much a single run costs,

- what the acceptable latency budget is,

then multi-agentism will most likely only mask the problems.

3. When the domain requires determinism more than “intelligence”

There are processes where classic software with a bit of AI works best, not an agent system. For example:

- hard business rules,

- formal validations,

- routing based on simple criteria,

- ETL tasks,

- predictable document transformations.

If most of the logic can be written as rules, do that. The agent should support areas of uncertainty, not replace everything indiscriminately.
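
One way to apply this is to put rules first and let a model handle only what the rules do not cover. A sketch with invented keywords and team names; `model_fallback` is whatever model-backed function you would plug in:

```python
from typing import Callable, Optional

def rule_based_team(ticket: str) -> Optional[str]:
    """Deterministic routing for the cases that simple rules cover."""
    text = ticket.lower()
    if "invoice" in text or "payment" in text:
        return "billing"
    if "password" in text or "login" in text:
        return "it-support"
    return None  # no rule matched

def assign_team(ticket: str, model_fallback: Callable[[str], str]) -> str:
    team = rule_based_team(ticket)
    if team is not None:
        return team
    # Only the uncertain remainder reaches the model.
    return model_fallback(ticket)
```

A useful side effect: the share of tickets that reach `model_fallback` tells you how much of the problem actually involves uncertainty.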

4. When the cost of communication between agents eats up the benefit

Every exchange between agents costs tokens, time, state-tracking complexity, and the risk of losing context. In small tasks, this overhead can be greater than the value of specialization.

On paper it looks harmless: the planner sends a brief, the specialist responds, the reviewer comments, the coordinator merges the result. In practice, this can turn into 8–12 model calls where one or two would have been enough.

If the system runs slower, costs more, and only delivers marginally better results, the architectural decision is fairly clear.
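
A back-of-the-envelope comparison helps here. The sketch below assumes sequential calls of similar size, and every number in it is a placeholder rather than a measurement.

```python
def run_overhead(calls: int, tokens_per_call: int, usd_per_1k: float,
                 seconds_per_call: float) -> dict:
    """Rough per-run totals, assuming sequential calls of similar size."""
    tokens = calls * tokens_per_call
    return {
        "tokens": tokens,
        "usd": tokens / 1000 * usd_per_1k,
        "seconds": calls * seconds_per_call,
    }

# Placeholder numbers, not measurements.
single = run_overhead(calls=2, tokens_per_call=1500, usd_per_1k=0.002, seconds_per_call=2.0)
multi = run_overhead(calls=10, tokens_per_call=1500, usd_per_1k=0.002, seconds_per_call=2.0)
```

Under these assumptions, ten calls instead of two means five times the tokens, cost, and latency (20 seconds versus 4). The quality gain has to be worth that multiple.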

Typical pitfalls in multi-agent system design

Even where multi-agentism makes sense, it’s easy to fall into a few classic problems.

Unclear contracts between agents

“Agent A will pass the necessary context to agent B” sounds good until you have to debug production. Every agent should have clearly defined:

- input,

- expected data format,

- scope of responsibility,

- allowed tools,

- completion conditions,

- error policy.

Without that, communication between agents quickly turns into soft, prompt-based chaos.
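
The checklist above can be written down as a typed contract that is checked at every handoff. A minimal sketch; the `AgentContract` type, its field names, and the `reviewer` example are all assumptions made for illustration:

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple

@dataclass(frozen=True)
class AgentContract:
    name: str
    responsibility: str
    required_input: Tuple[str, ...]    # keys the agent expects
    required_output: Tuple[str, ...]   # keys it must return
    allowed_tools: FrozenSet[str]
    max_steps: int                     # completion condition: hard step budget
    on_error: str = "escalate"         # error policy: "retry", "escalate", or "abort"

    def check_input(self, payload: dict) -> None:
        missing = [k for k in self.required_input if k not in payload]
        if missing:
            raise ValueError(f"{self.name}: missing input fields {missing}")

    def check_output(self, result: dict) -> None:
        missing = [k for k in self.required_output if k not in result]
        if missing:
            raise ValueError(f"{self.name}: missing output fields {missing}")

reviewer = AgentContract(
    name="reviewer",
    responsibility="evaluate a generated patch",
    required_input=("patch", "task_id"),
    required_output=("verdict", "comments"),
    allowed_tools=frozenset({"run_tests"}),
    max_steps=5,
)
```

Calling `check_input` before an agent runs and `check_output` after it turns a vague handoff into an error with a name and a field list, which is far easier to debug than a silently degraded answer.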

Lack of central observability

If you can’t see the full task flow, you don’t have a production system; you have an experiment. You need to track:

- which agent made which decision,

- which tool was called,

- based on what context,

- how long each stage took,

- where the error occurred,

- what the execution cost was.

In multi-agent systems, observability is not an add-on. It’s a survival requirement.
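
As a starting point, a per-run trace can record one span per agent step. The sketch below is a hand-rolled illustration, not a replacement for a tracing library; the `Span` and `Trace` types and their fields are assumptions.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class Span:
    run_id: str
    agent: str
    tool: Optional[str]
    duration_s: float = 0.0
    tokens: int = 0
    error: Optional[str] = None

@dataclass
class Trace:
    run_id: str
    spans: List[Span] = field(default_factory=list)

    @contextmanager
    def step(self, agent: str, tool: Optional[str] = None):
        span = Span(self.run_id, agent, tool)
        start = time.perf_counter()
        try:
            yield span  # the caller can set span.tokens inside the block
        except Exception as exc:
            span.error = repr(exc)
            raise
        finally:
            # Recorded for both successful and failed steps.
            span.duration_s = time.perf_counter() - start
            self.spans.append(span)

    def total_tokens(self) -> int:
        return sum(s.tokens for s in self.spans)

    def failed_steps(self) -> List[str]:
        return [s.agent for s in self.spans if s.error]
```

Usage is a `with trace.step("planner") as span:` block around each agent or tool call. Failed steps are recorded too, so the trace shows where a run broke and what it had cost up to that point.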

Tool permissions that are too broad

An agent that “for convenience” has access to everything is no longer a controllable component. In production environments, you need to think like you would about distributed systems and security-by-design:

- least privilege,

- tool separation by role,

- input parameter validation,

- operation auditing,

- safe fallbacks.

This is especially important with tool servers and agent communication protocols.
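
Input validation, auditing, and a safe fallback can live in one small wrapper around every tool call. A sketch under assumptions: the tool names and schemas are hypothetical, and the in-memory `AUDIT_LOG` stands in for a real audit store.

```python
from typing import Any, Callable, Dict, List

AUDIT_LOG: List[Dict[str, Any]] = []

# Hypothetical schemas: tool name -> expected parameter names and types.
TOOL_SCHEMAS = {
    "get_invoice": {"invoice_id": str},
    "list_tickets": {"status": str, "limit": int},
}

def validate_params(tool: str, params: Dict[str, Any]) -> None:
    schema = TOOL_SCHEMAS.get(tool)
    if schema is None:
        raise ValueError(f"unknown tool: {tool}")
    unexpected = set(params) - set(schema)
    missing = set(schema) - set(params)
    if unexpected or missing:
        raise ValueError(
            f"{tool}: unexpected={sorted(unexpected)} missing={sorted(missing)}"
        )
    for key, expected_type in schema.items():
        if not isinstance(params[key], expected_type):
            raise TypeError(f"{tool}.{key} must be {expected_type.__name__}")

def guarded_call(agent: str, tool: str, params: Dict[str, Any],
                 impl: Callable[..., Any]) -> Any:
    """Validate and audit one tool call; rejected calls never reach the tool."""
    entry = {"agent": agent, "tool": tool, "params": dict(params)}
    try:
        validate_params(tool, params)
    except (ValueError, TypeError) as exc:
        entry["status"] = f"rejected: {exc}"
        AUDIT_LOG.append(entry)
        return None  # safe fallback
    entry["status"] = "allowed"
    AUDIT_LOG.append(entry)
    return impl(**params)
```

The design choice worth noting is that rejection happens before the tool runs and is logged either way, so the audit trail covers attempted calls, not only successful ones.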

Where MCP and the tool layer fit in

In practice, many multi-agent systems do not fail because of the model itself, but because of the tool layer: the way functions, data, and actions are exposed to agents. And that’s where the real engineering begins.

If agents are to work sensibly, they need stable, secure, and well-described interfaces to the outside world. MCP (Model Context Protocol) servers can be a very strong foundation here, because they organize the way models and agents use tools, resources, and context.

For CTOs and architects, that means several benefits at once:

- more predictable integrations,

- better separation of responsibilities,

- easier management of tool access,

- simpler scaling of the agent ecosystem,

- greater control over security and operability.

If a team wants to build agents not just “so it works in a demo,” but in a way that can be maintained in production, it’s worth going deeper into MCP server design.

A course that makes a lot of practical sense here

A good complement for teams designing an agent architecture is the course “Best practices for writing MCP servers”. It is aimed at experienced developers who are looking not for generalities but for specifics on designing, implementing, securing, and operationalizing MCP servers.

Why does this make sense specifically for system architects, CTOs, and R&D teams?

Because in multi-agent systems, the advantage rarely comes from the “clever prompt” itself. More often, the winning team is the one that designs the tool layer, communication contracts, and security mechanisms better. This course helps explain how to build MCP servers so they are ready to work with AI agents, LLM applications, and RAG systems, which is exactly where multi-agentism most often enters the picture.

In short: if you’re planning agents but don’t have solid MCP foundations, it’s a bit like designing a drone fleet without a sensible communications system. It will fly. The question is just: where to?

How to make the architectural decision

Instead of asking “should we have many agents?”, it’s better to go through a few concrete criteria.

Ask yourself these questions

Does the task have natural responsibility boundaries?

If not, it will probably be hard to split agents sensibly.

Does specialization improve quality in a measurable way?

Not “it seems so,” but a measurable improvement on benchmarks, tests, and production data.

Is the orchestration cost acceptable?

Count tokens, latency, retries, monitoring, and maintenance.

Can the contracts between agents be clearly defined?

If the interfaces are vague, the system will be brittle.

Do you need different levels of permissions and isolation?

This is one of the strongest arguments for multi-agentism.

Do you have observability and evaluation?

Without them, you won’t be able to tell architecture from improvisation.

Simple rule: start with a single agent, then specialize

In many cases, a sensible path looks like this:

- build a working baseline with a single agent,

- measure quality, cost, and latency,

- identify bottlenecks,

- specialize only the elements that actually benefit from it,

- only then introduce multi-agent orchestration.

This approach has two advantages. First, it gives you a reference point. Second, it limits the temptation of premature complexity. And premature complexity, as we know, is very creative and usually shows up at the meeting uninvited.
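
The "measure" step does not need much tooling to get started. A minimal sketch, assuming exact-match scoring (real tasks often need a softer metric) and a `system` callable that wraps whatever you are evaluating:

```python
import time
from statistics import mean
from typing import Callable, List, Tuple

def evaluate(system: Callable[[str], str], cases: List[Tuple[str, str]]) -> dict:
    """Run a system over (input, expected) pairs; report accuracy and mean latency."""
    if not cases:
        raise ValueError("need at least one test case")
    correct = 0
    latencies = []
    for question, expected in cases:
        start = time.perf_counter()
        answer = system(question)
        latencies.append(time.perf_counter() - start)
        # Exact match after normalization: an assumption, not a universal metric.
        correct += int(answer.strip().lower() == expected.strip().lower())
    return {
        "accuracy": correct / len(cases),
        "mean_latency_s": mean(latencies),
        "n": len(cases),
    }
```

Run the same `cases` against the single-agent baseline and against each multi-agent variant. If the numbers do not move, the extra agents are not earning their keep.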

Examples: when yes, when no

Yes: due diligence analysis with multiple sources

You have a process where legal documents, financial data, operational history, and compliance risks need to be analyzed. Each area requires different context, different tools, and a different validation method. Here multi-agentism makes sense, because specialization is natural and the quality-control layer genuinely adds value.

Yes: an advanced copilot for a development team

One agent plans the work, another analyzes the repository, a third runs diagnostic tools, and a fourth reviews the generated patch. If the whole system is based on well-designed tools and controlled permissions, such a setup can be effective.

No: a company FAQ with simple search

If the main problem is retrieval and a concise answer based on a knowledge base, a single agent or even a classic RAG pipeline is usually enough. Adding three specialists and an orchestrator will most often improve the slides, not the product.

No: simple classification processes

If the task is to assign a ticket to a category and team, first check a classifier, rules, and a simple workflow. Multi-agentism can be an elegant way to create a very complicated version of something that previously worked in 200 lines of code.

What to remember

Multi-agent AI systems make sense when the complexity of the problem is real, not imagined. When you need specialization, context isolation, quality control, different tool-access policies, and deliberate orchestration. They do not make sense when they are used to hide the lack of a proper baseline, weak evaluation, or reluctance to write simpler logic in ordinary code.

For technical teams, the most important thing today is not to build the most “agentic” system possible. It’s to build one that can be:

- measured,

- secured,

- maintained,

- developed without chaos,

- defended to the business and operations teams.

And if your architecture is going to rely on agents using external tools, data, and services, then investing in MCP-related skills quickly stops being a “later” option. It becomes part of the foundation.